Your buyer types "best project management tool for a 50-person engineering team" into ChatGPT and gets three or four recommendations back. No ads, no blue links, no scrolling. Just a shortlist, ready to go.

That shortlist forms before they ever open Google. If your product isn't in it, you're not in the running. The demo never happens. You never even know you lost it.

That's answer engine optimization (AEO): the work of getting your product into those recommendations when buyers ask AI tools for help in your category.

This guide breaks down what AEO is, what to focus on first, and how to tell if it's actually moving your pipeline.

Answer engine optimization is the practice of structuring your content so AI-powered systems can find it, understand it, and surface it as a direct answer or cited source. Instead of optimizing for clicks on a list of links, you're optimizing to be the answer.

AEO is what decides whether you show up when a buyer asks an AI tool to recommend a solution in your category. If AI tools can't figure out what you do, who you serve, and why you're different, they'll recommend whoever made that easier to parse. Some call this answer engine marketing.

AEO optimizes for citations in AI-generated answers. SEO optimizes for rankings in search results. They share a lot of DNA, but they split in ways that matter when you're deciding where to spend your time.

Here's how they compare across seven dimensions:

The real difference is measurement. SEO gives you rankings and traffic in tools you already use. AEO requires new ways to track citations, mentions, and influence that happen outside your analytics.

AI isn't a traffic channel; it's a decision channel. Buyers use tools like ChatGPT and Gemini to build shortlists before they click through to a website. If your product isn't in those answers, you lose pipeline that you'll never see in your analytics.

ChatGPT hit 900 million weekly active users as of February 2026, per TechCrunch. Gemini reached 750 million monthly active users per Google’s Q4 2025 earnings, also via TechCrunch. These aren't niche tools. Your buyers are already in them, researching vendors and comparing products.

Across 74,000+ sites, AI tools only account for about 0.25% of total website referral traffic, per Ahrefs. Google drives 38.7%. It’s not even close.

SaaS-adjacent verticals run higher (reference sites see ChatGPT at 1.8% of traffic, 7x the average), but it's still a fraction of what traditional search delivers.

So 900 million people are using these tools every week, but barely any of that activity shows up as a visit to your website. That disconnect is the whole point.

ChatGPT referrals converted at 11.4%, compared to 5.3% for Google organic in a global e-commerce study, per Similarweb. Not every study agrees: A large-scale analysis of 973 e-commerce sites found ChatGPT referrals converted lower than organic, per Search Engine Land.

But the strongest lifts consistently show up in high-consideration categories, like B2B, SaaS, and financial services. That makes sense. By the time someone clicks through from an AI recommendation, they've already been told you're a fit. They're not browsing. They're ready to act.

Microsoft calls it the "invisible early-funnel": research happening entirely inside AI conversations that your attribution model can't observe. Shortlists form, options get eliminated, and decisions solidify before your data stack even knows a buyer exists. Your pipeline feels the impact anyway.

When AI Overviews appear on Google, around 83% of searches end without a click, per Similarweb. Every zero-click search on a category query where you're not cited is a buyer who moved on without you.

Gartner projected that traditional search engine volume would drop 25% by 2026 as AI chatbots replace many queries. Early data suggests that projection is landing close to the mark. A January 2026 Datos/SparkToro report found that Google desktop searches per user fell nearly 20% year-over-year in the US, based on clickstream data from tens of millions of users, per Search Engine Land. Europe saw a much smaller decline of only 2-3%, suggesting the shift is hitting US buyers hardest and fastest.

Buyer research is spreading across more surfaces, and most SaaS marketing teams have no idea whether they're showing up on the new ones.

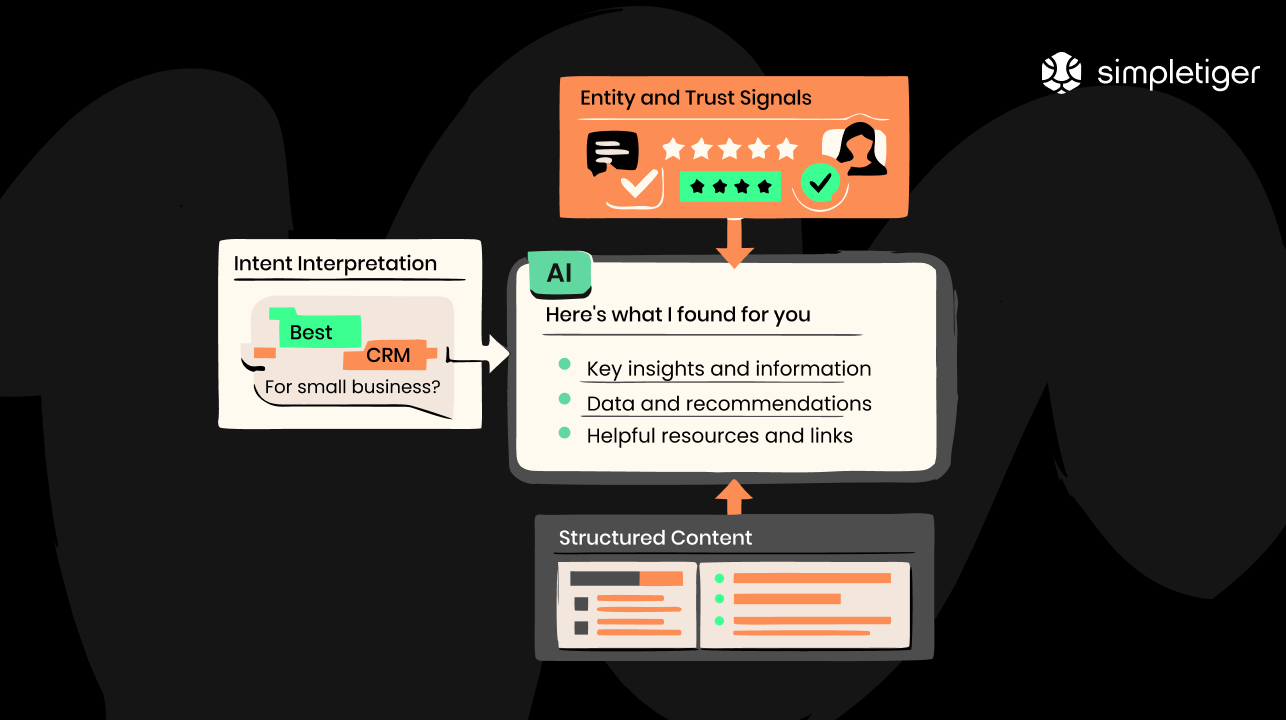

Answer engines pick sources based on three things: intent interpretation, entity authority, and content structure. Unlike Google, which ranks pages, AI answer engines pull from multiple sources and combine them into a single answer. Knowing how they pick helps you influence what they pick.

AI systems don't match keywords. They figure out what you're actually trying to do. "Best CI/CD tool for a growing engineering team" is interpreted as a product recommendation request with constraints on team size and growth stage. Your content needs to answer that intent directly, not just mention the right words.

Answer engines pull from sources they consider authoritative on a topic. That authority comes from consistent brand information across the web, expert authorship, third-party mentions and reviews, and how clearly your content connects your product to what buyers are asking about.

AI crawlers rely on clear heading hierarchies, structured data markup, and content that's already organized into extractable blocks. A 40- to 60-word definition sitting right under a question-format heading is easy for answer engines to pull. A 2,000-word essay where the answer is buried in paragraph twelve? Not so much.

If you've seen these acronyms floating around and thought, "Aren't these all the same thing?", you're mostly right. But there are some differences worth knowing.

The fastest way to see the difference is with an example. Say you sell project management software.

SEO gets you found. AEO gets you understood. GEO gets you trusted. In practice, you need all three working together. Here's a side-by-side breakdown:

Here's SimpleTiger’s take: For most SaaS teams, the practical playbook is about 80% the same, regardless of which acronym you use:

Where the distinction actually matters is in measurement. Across an Ahrefs study of 15,000 prompts, only 12% of URLs cited by AI tools like ChatGPT and Gemini also appear in Google's top 10 for the same query.

Ranking on Google and getting cited by AI are genuinely different outcomes, even if a lot of the work that drives both overlaps.

Each focus area makes your content easier for AI to find, understand, and cite.

Start with the questions your buyers are typing into ChatGPT. Pull from People Also Ask, AnswerThePublic, and your sales team's objection log. Those are the exact prompts prospects are using.

Whether you're writing a blog post, landing page, or comparison guide, lead each section with the question as a heading and a direct 40- to 60-word answer right below it. Then expand with details, examples, and context.

This "answer block" pattern is what AI systems look for when they're building responses. A clear question plus a concise answer is way easier to extract than a wall of text where the answer is buried somewhere in the middle.
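A quick way to audit this is to scan a page draft for question-format headings and count the words in the block directly below each one. The sketch below assumes the draft is stored as Markdown with `## ` headings; the function name `audit_answer_blocks` is hypothetical, and the 40 to 60 word range comes from the guidance above.

```python
def audit_answer_blocks(markdown, low=40, high=60):
    """Return (heading, word_count, in_range) for each question-format H2.

    Assumption: headings use Markdown "## " syntax and the first
    non-empty line after a heading is the answer block.
    """
    results = []
    lines = markdown.splitlines()
    for i, line in enumerate(lines):
        if line.startswith("## ") and line.rstrip().endswith("?"):
            # Take the first non-empty line after the heading as the answer.
            answer = next((l for l in lines[i + 1:] if l.strip()), "")
            if answer.startswith("#"):
                answer = ""  # another heading follows: no answer block at all
            count = len(answer.split())
            results.append((line[3:].strip(), count, low <= count <= high))
    return results


if __name__ == "__main__":
    draft = "## What is AEO?\n\nToo short to extract.\n"
    for heading, count, ok in audit_answer_blocks(draft):
        print(f"{heading}: {count} words, {'ok' if ok else 'rewrite'}")
```

Headings that are not phrased as questions are skipped, so the report only covers sections meant to work as answer blocks.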

AI crawlers and human readers both benefit from the same thing: content that's easy to scan and pull specific information from. Here’s how:

These formats match how answer engines display different types of information, and make your content more likely to get pulled into AI responses.

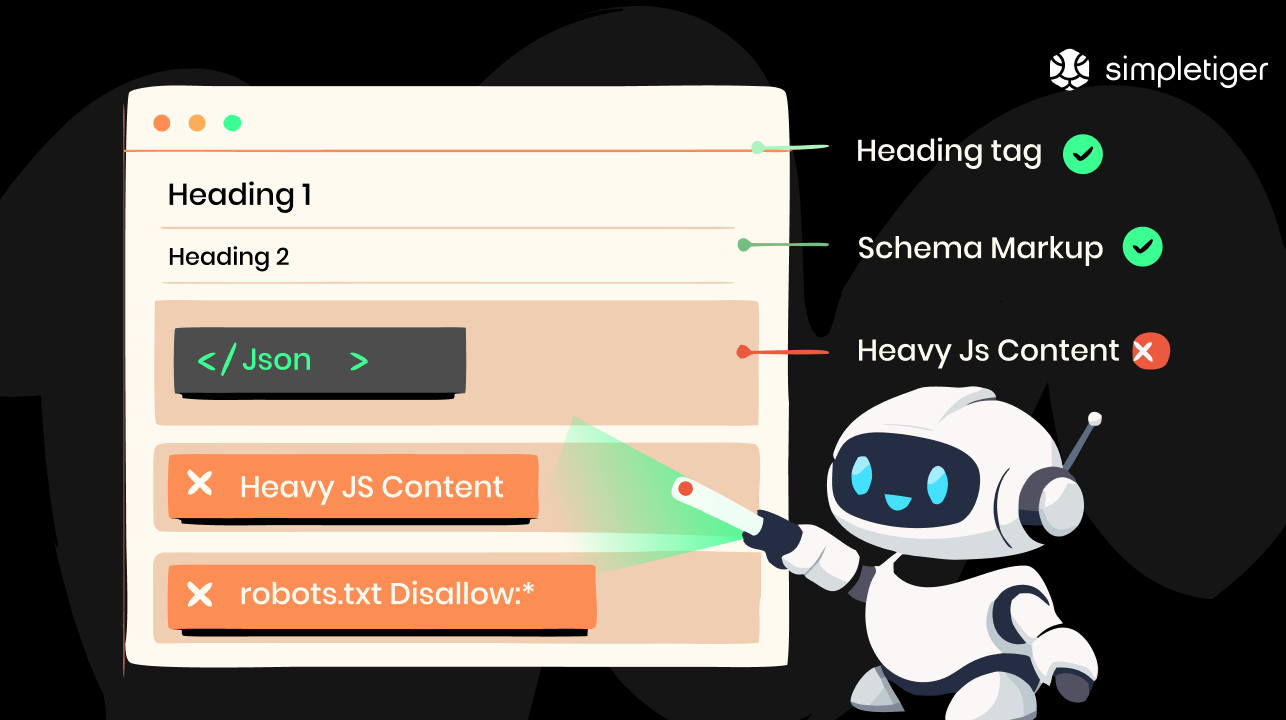

Schema markup helps AI systems understand what your content is about and how it's structured. Five schema types matter most for AEO. Here's when to use each one and what it tells AI crawlers:

Note: Search Engine Land documented that Google reduced FAQ-rich result visibility for most sites. The schema still helps AI parse your content; it just won't generate the visually rich result it used to.

One thing to check: Your schema needs to be in the initial HTML response. AI crawlers don't always execute JavaScript, so if your markup is injected after page load, they won't find it.
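As an illustration, the sketch below builds a schema.org `FAQPage` object and then checks whether JSON-LD is present in a raw HTML string, which is what a crawler that skips JavaScript would see. The helper names (`faq_jsonld`, `jsonld_types_in_html`) are hypothetical, and the HTML here is a stand-in for your server's initial response.

```python
import json
from html.parser import HTMLParser


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


class JsonLdFinder(HTMLParser):
    """Collect the @type of every JSON-LD script block in raw HTML."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.types = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                doc = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                return  # malformed markup is skipped, not reported
            if isinstance(doc, dict) and "@type" in doc:
                self.types.append(doc["@type"])


def jsonld_types_in_html(raw_html):
    """Return the schema types found without executing any JavaScript."""
    finder = JsonLdFinder()
    finder.feed(raw_html)
    finder.close()
    return finder.types


if __name__ == "__main__":
    schema = faq_jsonld([("What is AEO?", "A placeholder answer for illustration.")])
    page = (
        '<html><head><script type="application/ld+json">'
        + json.dumps(schema)
        + "</script></head><body></body></html>"
    )
    print(jsonld_types_in_html(page))
```

Running the same check against the unrendered HTML of a live page shows whether the markup is served up front or only injected after load. An empty list means there is nothing for a non-rendering crawler to read.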

AI systems don't just look at your website. They synthesize what the entire internet says about your product and brand. Third-party reviews, expert roundups, community discussions, partner pages, and press mentions all feed into what AI tools repeat.

You can't fully control this, but you can influence it. Get your product into credible comparison content and review sites. Encourage customer reviews on platforms AI tools pull from. Build relationships that lead to genuine expert mentions. The more consistent and positive the signal across multiple sources, the more likely AI tools are to recommend you.

Different AI engines can frame the same brand very differently. Google AI Overviews are 44% more likely than ChatGPT to surface negative sentiment about your brand, per BrightEdge.

This is where the experience, expertise, authoritativeness, trustworthiness (E-E-A-T) model matters. Author bios with real credentials, editorial review processes, citations to primary sources, and consistent brand information across the web all contribute to how AI systems evaluate your authority.

AI systems penalize low-trust language. Unverifiable superlatives like "industry-leading," "best-in-class," or "revolutionary" without backing data actually work against you, per Microsoft Advertising. If you claim it, cite it. If you can't cite it, cut it.

This is where many SaaS teams get stuck. Your content strategy can be perfect, but if AI crawlers can't access or render your pages, none of it matters. Follow this technical AEO readiness checklist to make sure your pages are accessible.
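One access check is easy to script: whether your robots.txt blocks AI crawlers from a given URL. The sketch below uses Python's standard `urllib.robotparser`. The user-agent tokens in `AI_CRAWLERS` are an assumption about which crawlers matter to you, so verify the current tokens in each vendor's documentation, and note that `blocked_ai_crawlers` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Assumption: a sample of AI crawler tokens; confirm against vendor docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the crawlers that robots.txt disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(blocked_ai_crawlers(sample, "https://example.com/pricing"))
```

This only covers access. A page can be fully crawlable and still render as empty HTML without JavaScript, so treat it as one item among several.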

At SimpleTiger, technical AEO readiness is a core part of our GEO service for SaaS. We audit and fix these technical issues as part of every SaaS client engagement.

Measurement is where most AEO content gets thin. To justify this investment to your CEO, you need more than "track your citations." The table below provides an overview of the top five metrics that relate to pipeline.

Yes, for most SaaS categories, the directional evidence is strong enough to act on, and the cost of doing nothing is measurable.

NerdWallet reported 37% revenue growth in Q4 2024 despite a 20% decline in monthly unique users. Visits dropped, but the influence on buying decisions didn't.

For SaaS, the parallel is obvious: If a buyer asks an AI tool for recommendations and your competitor gets cited, that's influence that never touches your Google Analytics.

Stack Overflow saw a 14% traffic drop in April 2023, the month after GPT-4 launched, per Similarweb. Developers stopped searching and started asking AI instead.

The same behavioral shift is happening with B2B buyers researching software. If your product isn't in those AI answers, you're not just losing clicks. You're losing the shortlist.

Take your estimated organic traffic from category-level queries. Assume 25% shifts to AI search (that's Gartner's projection). If you're cited in 0% of those AI responses, multiply the lost traffic by your demo conversion rate, then by your average deal value.

An example: 10,000 monthly category visits × 25% shifting to AI = 2,500 AI queries.

At a 0% citation rate, a 2% demo conversion rate, and a $25,000 average deal, that's 50 missed demos and $1.25 million in pipeline every month, or roughly $15 million a year, that you're leaving on the table. Your numbers will be different. But the framework tells you whether AEO deserves a budget conversation.
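To run the framework with your own inputs, here is a minimal sketch of the arithmetic. Since the visit count is monthly, the $1.25 million result is a monthly figure, and the sketch also multiplies by 12 for an annualized estimate. The `(1 - citation_rate)` term is an assumption that generalizes the 0% case, and `missed_pipeline` is a hypothetical function name.

```python
def missed_pipeline(monthly_category_visits, ai_shift_rate, citation_rate,
                    demo_conversion_rate, avg_deal_value):
    """Estimate pipeline lost to AI answers that do not cite you."""
    ai_queries = monthly_category_visits * ai_shift_rate
    # Assumption: queries where you are cited are not counted as lost.
    missed_queries = ai_queries * (1 - citation_rate)
    missed_demos = missed_queries * demo_conversion_rate
    monthly = missed_demos * avg_deal_value
    return {
        "ai_queries": ai_queries,
        "missed_demos": missed_demos,
        "monthly_pipeline": monthly,
        "annual_pipeline": monthly * 12,
    }


if __name__ == "__main__":
    estimate = missed_pipeline(
        monthly_category_visits=10_000,
        ai_shift_rate=0.25,
        citation_rate=0.0,
        demo_conversion_rate=0.02,
        avg_deal_value=25_000,
    )
    print(f"${estimate['monthly_pipeline']:,.0f} per month")  # $1,250,000 per month
    print(f"${estimate['annual_pipeline']:,.0f} per year")    # $15,000,000 per year
```

The result is pipeline value, not closed revenue; apply your own win rate before taking it into a budget conversation.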

Nobody has perfect AEO attribution yet. The entire research phase is invisible to your analytics. But the evidence is strong enough to act on, especially if your competitors are already showing up in AI recommendations and you're not.

Fair warning: This category moves quickly. New tools pop up constantly, existing ones ship features every few weeks, and what's best right now won’t necessarily be best in three months.

This is what the landscape looks like as of this writing.

Citation tracking is where most teams should start.

Content optimization tools help you format content for AI extraction.

Brand monitoring across AI platforms is the final category.

Don't try to buy all of these at once. Start with citation tracking, so you know where you stand. Then layer in content optimization or brand monitoring based on where your biggest gaps are.

When someone asks ChatGPT for "best SEO agencies for SaaS companies," SimpleTiger shows up as the #1 recommendation. Here's a real result from March 2026:

That's not luck. It's the same work this guide covers:

We do this for ourselves, and we do it for clients through our GEO service for SaaS. Curious where your product stands? We can show you. Book a Discovery Call today.

Run 20–30 prompts like "best [category] for [use case]" across ChatGPT, Gemini, and Perplexity, once a week for a month. Log whether any brand in your space gets cited. If competitors are showing up consistently, your buyers are using AI to research. You're just not in the conversation yet.

Pull prompt ideas from your sales team's objection log and Google's People Also Ask. These are the real questions buyers type into AI tools. If three or more competitors get cited across multiple prompts, the channel is active, and you're losing consideration share by not being there.
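If you log the audit in a spreadsheet, a short script can summarize it. The sketch below assumes each log entry records the engine, the prompt, and the brands cited in the response. The field names and both function names are hypothetical, and "multiple prompts" is read here as two or more distinct prompts.

```python
from collections import Counter


def citation_share(audit_log):
    """Fraction of logged prompt runs in which each brand was cited."""
    runs = len(audit_log)
    counts = Counter()
    for entry in audit_log:
        counts.update(set(entry["cited"]))  # count a brand once per run
    return {brand: n / runs for brand, n in counts.items()} if runs else {}


def channel_is_active(audit_log, own_brand, min_competitors=3, min_prompts=2):
    """True if enough competitors are cited across enough distinct prompts."""
    prompts_by_brand = {}
    for entry in audit_log:
        for brand in set(entry["cited"]):
            if brand != own_brand:
                prompts_by_brand.setdefault(brand, set()).add(entry["prompt"])
    recurring = [b for b, prompts in prompts_by_brand.items()
                 if len(prompts) >= min_prompts]
    return len(recurring) >= min_competitors


if __name__ == "__main__":
    log = [
        {"engine": "ChatGPT", "prompt": "best X for startups", "cited": ["A", "B", "C"]},
        {"engine": "Gemini", "prompt": "best X for enterprise", "cited": ["A", "B", "C"]},
        {"engine": "Perplexity", "prompt": "best X for startups", "cited": ["A"]},
    ]
    print(citation_share(log))
    print(channel_is_active(log, own_brand="Us"))
```

Rerunning this on each week's log gives you a trend line for citation share rather than a single snapshot.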

The quickest test: disable JavaScript in Chrome DevTools (Cmd+Shift+P, type "Disable JavaScript") and load your top 10 pages. Anything that vanishes is content that answer engines will never process. Fix those pages with server-side rendering or static HTML.

Then check two more things:

There's no reliable timeline. Some AI tools use live retrieval and can cite new content within days. Others depend on training data that updates on its own schedule. The honest answer is that citation appearance is less predictable than Google rankings.

That said, the fundamentals still apply. Pages that already rank well on Google and have strong third-party citations from places like G2, Capterra, or industry publications tend to get picked up faster. Start there. Use question-format content and schema markup. Then track your citation share monthly and look for movement rather than waiting for a specific date.

Add "AI search (ChatGPT, Gemini, Perplexity)" as an option in your "How did you hear about us?" field on demo and trial forms. Train your sales team to ask about it during discovery calls. Tag those records in your CRM as "AI-assisted" so you can track influenced pipeline separately from direct organic.

You can also correlate direct traffic spikes with AI citation appearances by logging your weekly citation audit dates and comparing them to CRM timestamps. This is assisted attribution, not last-click. The goal is to quantify influence, not replace your GA4 tracking.

You don't need to rebuild anything. Start by reformatting your top 10–15 pages to include question-format H2s with direct answer blocks below them. Add FAQPage or HowTo schema. Make sure the content is visible without JavaScript rendering. That's it for round one.

Focus on pages that already rank well or target high-intent queries, like comparisons and implementation guides. Those are where AI citations have the highest pipeline impact.


Bella is Content Director at SimpleTiger, responsible for building the strategy, systems, and team that scale content-driven growth for our SaaS clients.